Key takeaways

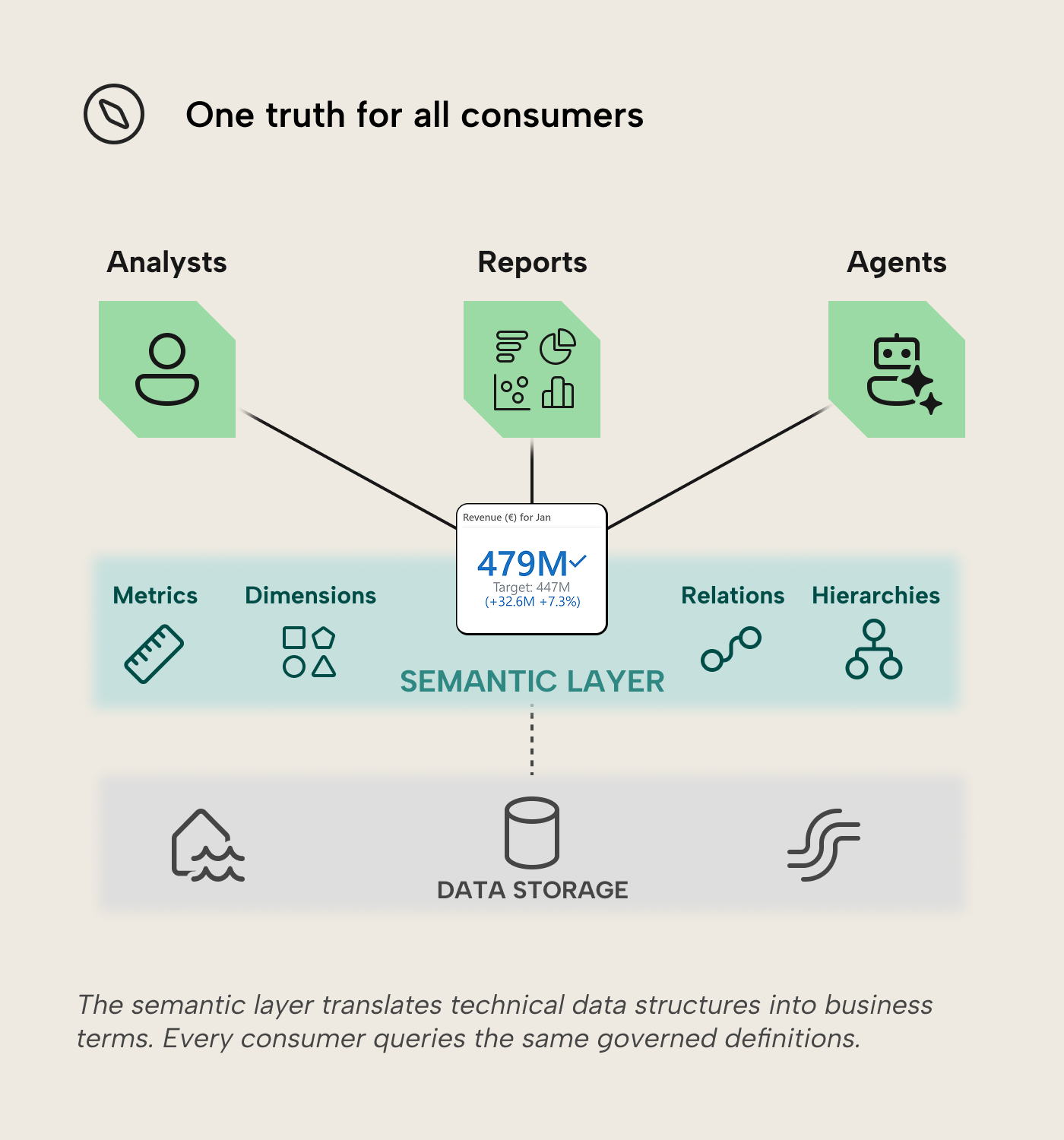

- A semantic layer translates raw data into business terms. It sits between your data storage and your analytics tools, providing consistent definitions for metrics, dimensions, and business logic—defined once, reused everywhere.

- Every major data platform implements the concept differently. Microsoft calls it a “semantic model”; Databricks embeds it in Unity Catalog as metric views; Tableau centralizes it with Tableau Semantics; and Palantir builds it into its Ontology. The architecture varies, but the purpose is the same.

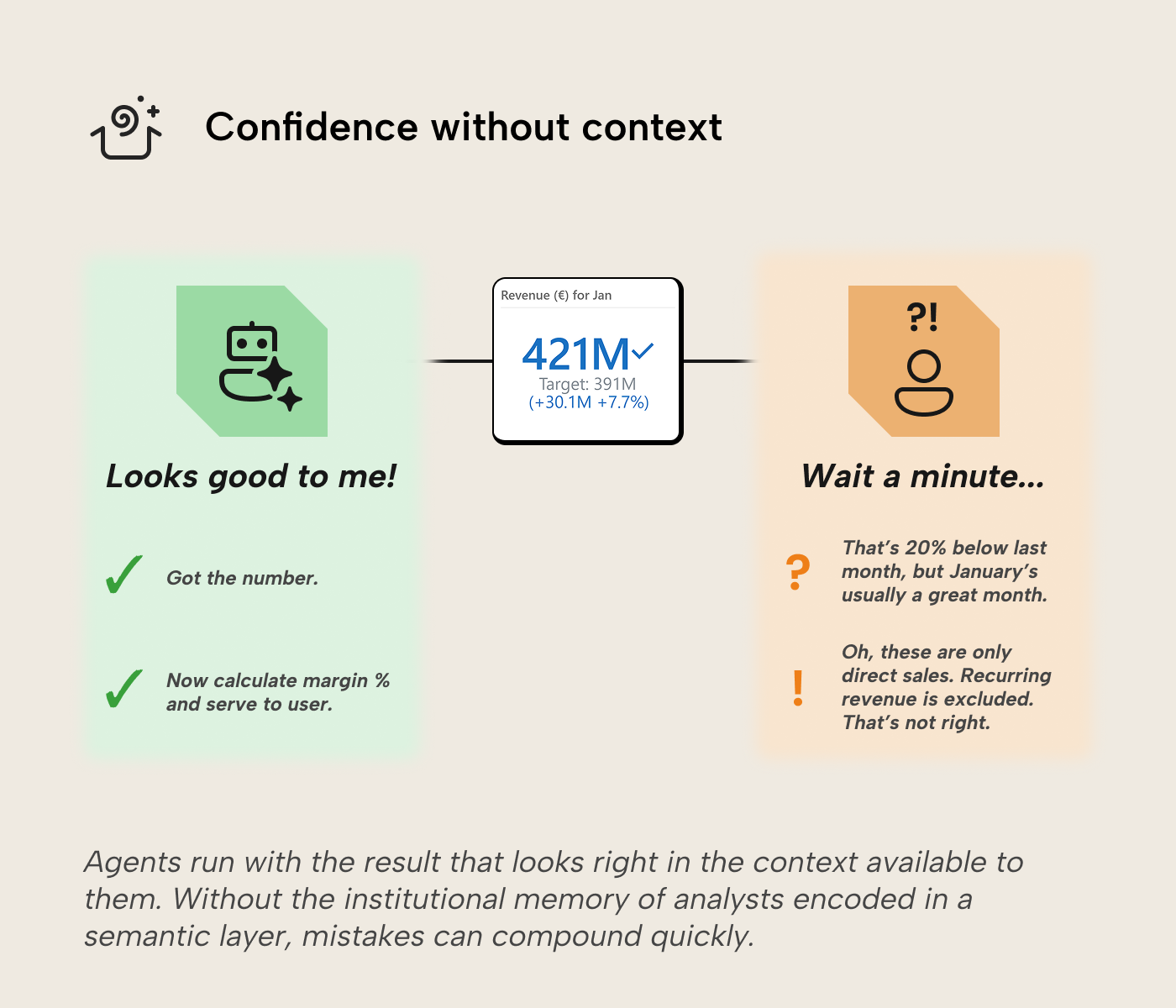

- A semantic layer serves as a concrete form of institutional memory. It captures business logic, and the knowledge of how to derive meaning from data, that would otherwise remain implicit in experienced analysts’ heads. Without it, every consumer—and especially AI agents, which have no memory of their own—computes confidently from whatever definition it finds first.

This summary was written by the author, not by AI.

With thanks to Greg Baldini for his contributions to this article.

Every data platform has a name for it—semantic model, metric view, ontology—but the concept is the same: a shared layer that defines what business terms mean and makes those definitions available to reports, spreadsheets, APIs, and increasingly, AI agents.

Why have a semantic layer?

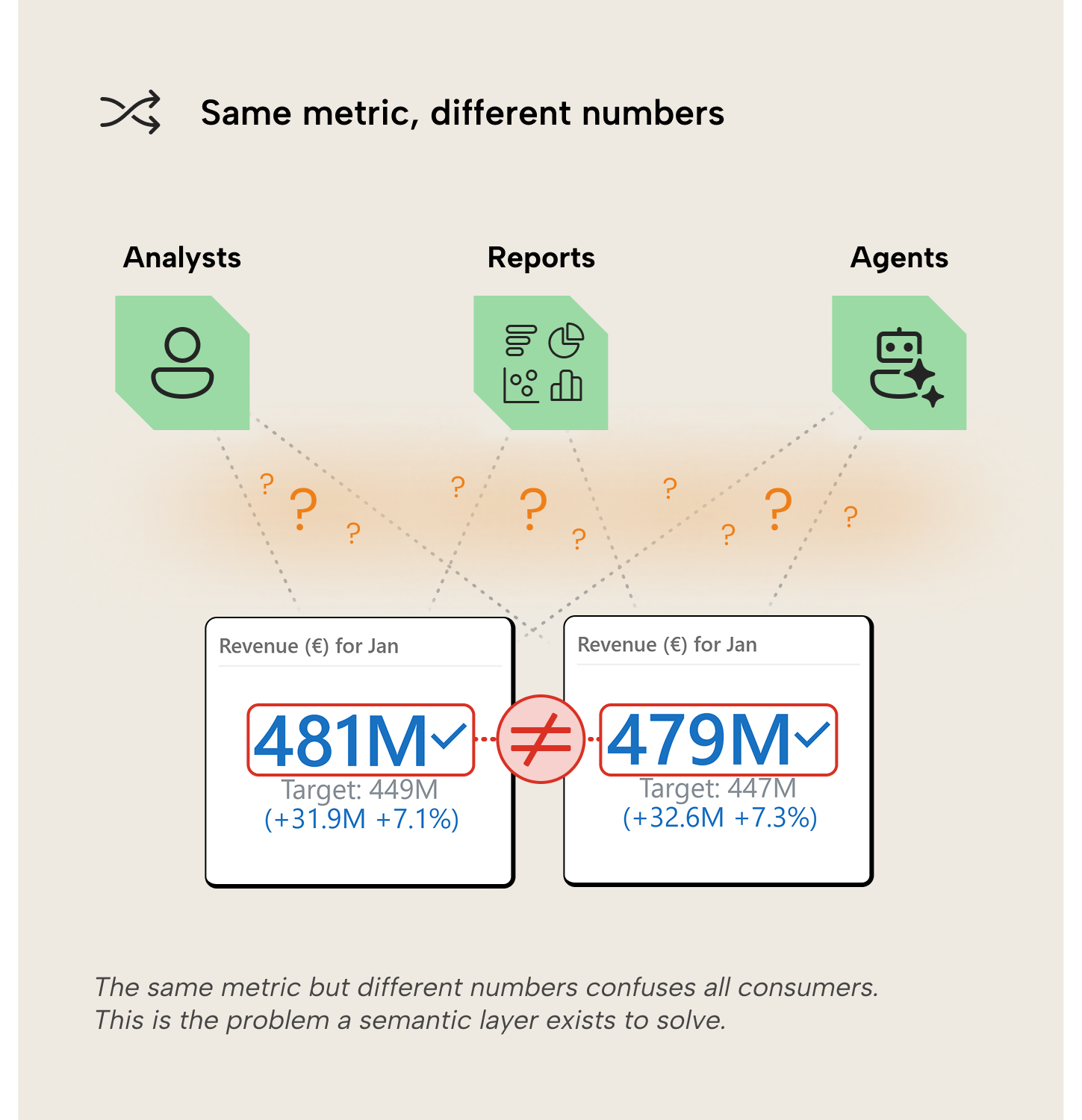

Before we define what a semantic layer is, it’s worth highlighting why it’s useful to have one. Sooner or later, many analytics teams hit the same wall: inconsistent metrics. It often goes like this:

- Multiple teams build their own reports. Inevitably, some of them use the same metric names, such as ‘Revenue’.

- Each team writes its own calculations—gross sales here, net of returns there, renewals included in one place but not the other.

- The ‘Revenue’ numbers in the finance team’s reports do not match the ‘Revenue’ numbers in the sales team’s reports. Every report looks right in isolation, but the organization cannot agree on which number is correct.

- Teams must shift their focus from analyzing and automating to reconciling and integrating.

A semantic layer helps prevent this by centralizing business definitions. The same logic is defined once, documented, governed, and then reused everywhere, no matter which team or tool is asking the question. That includes AI agents—more on that later.
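To make the pattern concrete, here is a minimal Python sketch with made-up order lines. Two hypothetical team-specific functions each compute their own ‘Revenue’ and disagree; the alternative is a single named definition that every consumer looks up. The choice of “net of returns, renewals included” as the governed definition is arbitrary, and the dictionary registry is only a stand-in for what a real semantic layer stores and governs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OrderLine:
    amount: float     # gross sale amount
    returned: bool    # True if this line was returned
    is_renewal: bool  # True if this line is a subscription renewal


# Hypothetical sample data, for illustration only.
ORDERS = [
    OrderLine(100.0, returned=False, is_renewal=False),
    OrderLine(50.0, returned=True, is_renewal=False),
    OrderLine(30.0, returned=False, is_renewal=True),
]


# Without a semantic layer: each team writes its own 'Revenue'.
def finance_revenue(lines):
    # Net of returns, renewals included.
    return sum(line.amount for line in lines if not line.returned)


def sales_revenue(lines):
    # Gross sales, renewals excluded.
    return sum(line.amount for line in lines if not line.is_renewal)


# With a semantic layer: one documented definition, looked up by name.
METRICS = {
    "Revenue": {
        "description": "Net of returns, renewals included.",
        "compute": lambda lines: sum(line.amount for line in lines if not line.returned),
    },
}


def metric(name, lines):
    """Every report, notebook, or agent calls this instead of re-deriving the logic."""
    return METRICS[name]["compute"](lines)


print(finance_revenue(ORDERS), sales_revenue(ORDERS))  # 130.0 150.0
print(metric("Revenue", ORDERS))                       # 130.0
```

The dictionary itself is incidental; what matters is the lookup by name. Once ‘Revenue’ resolves through one definition, the finance report and the sales report cannot silently diverge.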

So what is a semantic layer?

A semantic layer is the part of a data stack that translates technical data structures into business terms. It sits between the data storage platform (commonly a data warehouse or lakehouse) and analytics tools, so analysts and business users can ask questions about data without rewriting logic every time. This definition is not entirely ours; it is a synthesis of the definitions from Databricks, dbt, and IBM. Microsoft doesn’t define the term “semantic layer” separately; it goes straight to the implementation: semantic models.

Microsoft: semantic models in Power BI, Fabric, and Analysis Services

Microsoft’s semantic layer starts with semantic models (formerly called “datasets”), built on the same tabular model technology that powers Analysis Services. A semantic model layers business metrics, dimensions, and calculation logic on top of data so reports and other tools can query them. DAX is the query language; VertiPaq handles in-memory storage; XMLA endpoints open the door to enterprise tooling and automation. A model can serve data from the VertiPaq in-memory cache (Import mode) or pass queries through to the source system (DirectQuery). Direct Lake, available in Fabric, is a third option that loads data from OneLake Delta tables into VertiPaq on demand.

Linking models across workspaces is possible through composite models, but it comes with significant limitations. In practice, semantic models tend to be decentralized, each covering one or a handful of related business processes. Teams can build their own model per domain or even per report. That flexibility is useful, but it increases the risk of definition drift unless models are deliberately treated as shared products with clear ownership, versioning, and reuse patterns. Dimensions like Customer or Product show up in many models, each with its own copy and its own definition. The inconsistency that a semantic layer is supposed to prevent at the report level can reappear at the model level.
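One way to catch model-level drift early is to compare how shared dimensions are defined across models. The sketch below assumes each model’s dimension definitions have already been exported and reduced to a comparable string (here, the source query); the model names, tables, and filters are invented, and a real semantic model dimension carries far more than a query.

```python
from collections import defaultdict

# Hypothetical exports: model -> dimension -> definition (reduced to its source query).
MODELS = {
    "Sales": {"Customer": "SELECT * FROM crm.customers WHERE is_active = true"},
    "Finance": {"Customer": "SELECT * FROM erp.customers"},
    "Support": {
        "Customer": "SELECT * FROM crm.customers WHERE is_active = true",
        "Product": "SELECT * FROM erp.products",
    },
}


def find_drift(models: dict[str, dict[str, str]]) -> dict[str, dict[str, list[str]]]:
    """Return only the dimensions defined in more than one way, grouped by definition."""
    variants = defaultdict(lambda: defaultdict(list))
    for model_name, dimensions in models.items():
        for dimension, definition in dimensions.items():
            variants[dimension][definition].append(model_name)
    return {dim: dict(defs) for dim, defs in variants.items() if len(defs) > 1}


for dimension, definitions in find_drift(MODELS).items():
    print(dimension, definitions)
```

Exact string comparison is deliberately naive; a practical check would normalize definitions first. Even so, it turns “each model has its own copy” from a vague worry into a list someone can own.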

Microsoft’s answer is a layer above the models: the Ontology. It defines reusable business concepts (entity types like Customer, Order, Product), their properties, relationships, and business rules, and binds them to actual data sources: semantic models, lakehouse tables, eventhouse streams. The intent is to standardize business concepts across models and sources so that both humans and AI agents can reuse them consistently. Graph queries traverse the relationships defined in the Ontology, pulling data from multiple bound sources (e.g., Customer → Order → Product to calculate ‘Revenue’).
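The Customer → Order → Product traversal can be sketched in a few lines of Python. This is not Fabric’s Ontology API; the entity types, properties, and the `revenue_for_customer` helper are hypothetical. The sketch only shows the idea that ‘Revenue’ is derived by following declared relationships, rather than by each consumer re-joining tables its own way.

```python
from dataclasses import dataclass, field


@dataclass
class Product:
    name: str
    unit_price: float


@dataclass
class Order:
    order_id: str
    # Relationship Order -> Product, with a quantity on each edge.
    lines: list[tuple[Product, int]] = field(default_factory=list)


@dataclass
class Customer:
    name: str
    # Relationship Customer -> Order.
    orders: list[Order] = field(default_factory=list)


def revenue_for_customer(customer: Customer) -> float:
    """Traverse Customer -> Order -> Product and sum quantity * unit price."""
    return sum(
        quantity * product.unit_price
        for order in customer.orders
        for product, quantity in order.lines
    )


widget = Product("Widget", 10.0)
gadget = Product("Gadget", 25.0)
acme = Customer("Acme", [
    Order("O-1", [(widget, 3), (gadget, 1)]),
    Order("O-2", [(widget, 2)]),
])
print(revenue_for_customer(acme))  # 75.0
```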

Microsoft’s semantic layer operates at two levels: semantic models for per-domain business logic, and the Ontology in Fabric IQ for cross-source standardization. Semantic models are mature and widely adopted; the Ontology is ambitious but still new and in public preview.

Databricks

Databricks implements its semantic layer through Unity Catalog, a unified governance layer for data and AI assets. The core construct is the metric view: a YAML-defined object that specifies measures, dimensions, and source tables. Metric views are queried with SQL, using the MEASURE function to reference measures. Tools where analysts write their own SQL, such as notebooks and SQL editors, can consume them directly. Tools that generate SQL, like BI platforms, need to support MEASURE to query metric views correctly.
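As a rough illustration, the Python sketch below mirrors the shape of a metric view (a source, named dimensions, and named measures, each with a SQL expression) as a plain dictionary, and builds the kind of query a consuming tool would send. The table and field names are made up, the dictionary is not the exact YAML schema Databricks uses, and the generated SQL (including `GROUP BY ALL`) is an assumption to check against the metric view documentation. What it does show is the contract: consumers reference measures by name through MEASURE and never restate the aggregation logic.

```python
# Hypothetical metric view, mirroring the shape of the YAML definition
# (a source plus named dimensions and measures). Not the exact Databricks schema.
METRIC_VIEW = {
    "source": "main.sales.orders",
    "dimensions": {
        "region": "region",
        "order_month": "DATE_TRUNC('MONTH', order_date)",
    },
    "measures": {
        "revenue": "SUM(net_amount)",
    },
}


def build_query(view_name: str, view: dict, dimensions: list[str], measures: list[str]) -> str:
    """Build SQL that references measures via MEASURE(), never re-stating their logic."""
    unknown = [d for d in dimensions if d not in view["dimensions"]]
    unknown += [m for m in measures if m not in view["measures"]]
    if unknown:
        # A consumer can only ask for what the metric view defines.
        raise KeyError(f"not defined in metric view: {unknown}")
    select = list(dimensions) + [f"MEASURE({m})" for m in measures]
    return f"SELECT {', '.join(select)} FROM {view_name} GROUP BY ALL"


print(build_query("main.sales.revenue_metrics", METRIC_VIEW, ["region"], ["revenue"]))
# SELECT region, MEASURE(revenue) FROM main.sales.revenue_metrics GROUP BY ALL
```

This is also why SQL-generating tools need explicit MEASURE support: a tool that instead emits its own `SUM(...)` has quietly re-implemented the definition.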

The philosophy is SQL-first and close to the data. That makes the semantic layer in Databricks less a separate product than a governed metadata layer on top of the lakehouse. For organizations using both Databricks and Power BI, Tabular Editor’s Semantic Bridge lets a user import the structure and metadata of a metric view into tabular model objects: tables, columns, measures, and relationships. Business logic defined once in Unity Catalog flows into Power BI semantic models without re-implementation. ‘Revenue’ in Databricks and ‘Revenue’ in Power BI have the same definition and return the same number.

Tableau

Tableau has historically embedded its semantic layer in the data model and calculated fields within workbooks. The model defines relationships, dimensions, and measures that feed directly into the visual layer. That is intuitive for analysts, but definitions live inside individual workbooks, duplicated per report rather than shared and governed. Where Power BI risks definition drift across semantic models, Tableau risks it across workbooks: the same logic re-implemented by each analyst who builds a new view.

Salesforce is working to change this with Tableau Semantics, a more centralized approach to metric definitions that connects to the broader Data Cloud. The direction mirrors what Microsoft and Databricks are pursuing: moving definitions out of individual workbooks and into a governed layer.

Palantir

Palantir’s Foundry platform centers on the Ontology—a layer that models real-world objects (customers, orders, equipment), their properties, relationships, and actions. Where the other platforms’ semantic layers define metrics and dimensions for analytical consumption, Palantir’s Ontology also models what objects do.

An order can trigger a workflow, update its status, or escalate an exception. It covers operational logic alongside analytical queries; its scope is wider than the other platforms’ semantic layers, which is presumably why Palantir uses the term “ontology” rather than “semantic layer”.
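To make the difference concrete, here is a Python sketch of an object type that carries both properties (what analytical queries read) and actions (what the object can do). It is a loose analogy, not Foundry’s Ontology SDK: the `Order` type, its statuses, the allowed transitions, and the `escalate` action are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical business rule: which status changes an order may make.
ALLOWED_TRANSITIONS = {
    "open": {"shipped", "exception"},
    "shipped": {"delivered", "exception"},
    "exception": {"open"},
    "delivered": set(),
}


@dataclass
class Order:
    # Properties: what analytical queries read.
    order_id: str
    amount: float
    status: str = "open"
    history: list[str] = field(default_factory=list)

    # Actions: what the object can do, with the rules enforced in one place.
    def update_status(self, new_status: str) -> None:
        if new_status not in ALLOWED_TRANSITIONS[self.status]:
            raise ValueError(f"cannot move order {self.order_id} from {self.status} to {new_status}")
        self.history.append(f"{self.status} -> {new_status}")
        self.status = new_status

    def escalate(self, reason: str) -> None:
        # Stand-in for triggering a workflow; here it only records the event.
        self.update_status("exception")
        self.history.append(f"escalated: {reason}")


order = Order("A-17", 120.0)
order.update_status("shipped")
order.escalate("carrier lost parcel")
print(order.status, order.history)
```

A metrics-only semantic layer would stop at `order_id`, `amount`, and `status`; the methods are the part that turns the model into operational logic, along with the extra complexity discussed next.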

That wider scope comes with more complexity. For organizations whose analytical and operational workflows are tightly coupled, the Ontology can consolidate what would otherwise be separate systems. For teams that primarily need governed metrics for reporting, it may be more architecture than the problem requires.

The semantic layer in the age of AI agents

The problem of inconsistent metrics gets worse when AI agents enter the picture. A human analyst who has worked with the organization’s data has absorbed the business logic through experience. When a revenue number looks off, they can often tell why—a regional filter wasn’t applied, a product line was excluded—just from the magnitude of the discrepancy, and they debug with a clear direction. An AI agent has none of that institutional memory. The data doesn’t have to be dirty for it to fail; clean tables with accurate numbers still produce wrong results when the agent lacks the context to pick the right ones. It finds a column or measure called ‘Revenue’, computes, and moves on. If that happens to be the wrong ‘Revenue’—gross instead of net, renewals included where they shouldn’t be—the agent builds on the error without second-guessing it, compounding it until the result is no longer a traceable mistake but garbled nonsense. Where a human analyst would hesitate, the agent serves that nonsense fast, confidently, and at scale.

This is where the semantic layer earns its keep more than ever: by making implicit knowledge explicit. The business context that experienced analysts carry in their heads gets encoded in a layer that every consumer can read. That’s useful for new analysts on day one, for future you revisiting the model in six months, and for the LLM that has no knowledge of your revenue logic because it’s not in the training data and (hopefully) not on the public web. The AI agent doesn’t have to guess what rev_net_adj_q3 means because its meaning is contained in the semantic layer.
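A minimal sketch of what making implicit knowledge explicit can look like: the semantic layer stores a display name, description, and synonyms next to each technical name, an agent’s prompt context is built from that metadata rather than from column names alone, and a term the layer doesn’t define resolves to nothing instead of a guess. The field names, descriptions, and the `grounding_context` and `resolve` helpers are hypothetical; real platforms assemble this context in their own ways.

```python
# Hypothetical semantic-layer metadata; synonyms are stored lowercase for matching.
SEMANTIC_LAYER = {
    "rev_gross": {
        "display_name": "Gross Revenue",
        "description": "Sum of order amounts before returns.",
        "synonyms": ["gross sales"],
    },
    "rev_net_adj_q3": {
        "display_name": "Net Revenue (Q3 adjusted)",
        "description": "Order amounts net of returns, restated with Q3 adjustments.",
        "synonyms": ["net revenue", "revenue"],
    },
}


def grounding_context(layer: dict) -> str:
    """Render field metadata as plain text that an LLM prompt can include."""
    lines = []
    for name, meta in sorted(layer.items()):
        synonyms = ", ".join(meta["synonyms"]) or "none"
        lines.append(f"{name}: {meta['display_name']}. {meta['description']} Synonyms: {synonyms}.")
    return "\n".join(lines)


def resolve(term: str, layer: dict):
    """Map a business term to a field name; return None instead of guessing."""
    term = term.strip().lower()
    for name, meta in layer.items():
        if term == meta["display_name"].lower() or term in meta["synonyms"]:
            return name
    return None


print(grounding_context(SEMANTIC_LAYER))
print(resolve("Revenue", SEMANTIC_LAYER))  # rev_net_adj_q3
print(resolve("profit", SEMANTIC_LAYER))   # None
```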

Every major platform now has an AI consumer that leans on the semantic layer. In Power BI and Fabric, Copilot uses the semantic model as grounding data—fetching the schema, descriptions, linguistic schema, and other model metadata to interpret a user’s natural-language question. Hidden fields and tables stay out of Copilot’s reach, so model authors control what Copilot gets to work with. That makes model quality the bottleneck: if names are vague, descriptions empty, and synonyms missing, Copilot’s answers will reflect that lack of context. The Prep data for AI feature helps by letting developers select which fields Copilot can see, define verified answers, and add contextual instructions. At the Ontology level, Fabric data agents and operations agents use the Ontology as a guiding layer to keep metrics consistent regardless of which underlying sources hold the data.
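Because model metadata is the bottleneck, it can pay to audit it before an AI consumer ever sees it. The Python sketch below is a hypothetical check, not a Power BI or Fabric API: the `ModelField` type and the rules are assumptions. It drops hidden fields, as the text describes Copilot doing, and flags visible fields whose descriptions or synonyms are missing.

```python
from dataclasses import dataclass, field


@dataclass
class ModelField:
    name: str
    description: str = ""
    synonyms: list[str] = field(default_factory=list)
    hidden: bool = False


def visible_fields(fields: list[ModelField]) -> list[ModelField]:
    """What an AI consumer would get to see: hidden fields are left out."""
    return [f for f in fields if not f.hidden]


def audit(fields: list[ModelField]) -> list[tuple[str, str]]:
    """Flag visible fields whose metadata is too thin to ground an AI answer."""
    problems = []
    for f in visible_fields(fields):
        if not f.description.strip():
            problems.append((f.name, "missing description"))
        if not f.synonyms:
            problems.append((f.name, "no synonyms"))
    return problems


model = [
    ModelField("Revenue", "Net of returns, renewals included.", ["sales", "turnover"]),
    ModelField("rev_tmp_calc", hidden=True),  # helper column, hidden from consumers
    ModelField("Qty"),
]
print(audit(model))  # [('Qty', 'missing description'), ('Qty', 'no synonyms')]
```

The hidden helper column is never flagged because no consumer will see it; the effort goes where the AI actually looks.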

The pattern repeats across platforms. On Databricks, AI/BI Genie translates natural-language questions into SQL using Unity Catalog metadata, metric view definitions, and analyst-provided instructions. Since October 2025, metric views also support semantic metadata (display formats, descriptions) that travels with the metric: a format defined on ‘Revenue’ follows it wherever it’s consumed. Tableau’s AI assistants ground their responses in Tableau Semantics definitions, the same governed metrics that feed dashboards. Palantir’s AIP (Artificial Intelligence Platform) agents reason over the Ontology, working from the same object definitions, relationships, and business rules that drive operational workflows.
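The idea that a format travels with the metric can be sketched by storing the display format on the metric definition itself and having every consumer render through it. The `Metric` class and the Python format spec here are hypothetical stand-ins; Databricks declares display formats in the metric view’s own syntax, not like this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Metric:
    name: str
    description: str
    display_format: str  # a Python format spec, standing in for the platform's own format syntax
    prefix: str = ""

    def render(self, value: float) -> str:
        """Format a value the same way for every consumer."""
        return f"{self.prefix}{value:{self.display_format}}"


REVENUE = Metric("Revenue", "Net of returns, renewals included.", ",.2f", prefix="$")


# Two different consumers; neither re-implements formatting.
def dashboard_tile(metric: Metric, value: float) -> str:
    return f"{metric.name}: {metric.render(value)}"


def agent_answer(metric: Metric, value: float, region: str) -> str:
    return f"{metric.name} in {region} was {metric.render(value)} ({metric.description})"


print(dashboard_tile(REVENUE, 1234567.891))  # Revenue: $1,234,567.89
print(agent_answer(REVENUE, 1234567.891, "EMEA"))
```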

Regardless of flavor, the agent is bounded by what’s in the model—it can only work with the definitions the layer provides. That’s on purpose. Without a governed layer to attach meaning to numbers, every agent invents its own definition. This is the same problem that had teams reconciling drifted numbers by hand, except now the disagreement can spread at machine speed.

Further recommended reading

- Transactive Memory: A Contemporary Analysis of the Group Mind (Daniel M. Wegner, 1987). A shared directory of “who knows what” lets groups access more knowledge than any individual holds. The parallel to a semantic layer is clear; both externalize meaning so the group or organization doesn’t depend on whoever happens to remember.

- BI by Another Name (Benn Stancil, 2023). Even if a semantic layer encodes business logic well, it can, by nature, feel like a constraint on genuine analytical exploration. This article frames the problem honestly and doesn’t pretend to solve it—neither do we, for that matter.

In conclusion

The semantic layer is a concept, and the name each platform gives it matters less than what you put in it. The hard work is the same everywhere: deciding what your metrics mean, writing those definitions down, governing them, and making them available to everyone who needs them. That work has always been worth doing well.

With AI agents increasingly consuming business data, the debt of skipping that work will only compound further and faster. It’s no coincidence that all the major players mentioned in this article use the semantic layer as the basis for AI consumption of analytical data. Generative AI gives analytics a longer lever. To handle that kind of power, we need stronger foundations that can withstand the speed, scale, and pressure AI agents put on analytics processes. The semantic layer is that foundation.